Time-series forecasting is ubiquitous in various domains, such as retail, finance, manufacturing, healthcare and natural sciences. In retail use cases, for example, it has been observed that improving demand forecasting accuracy can meaningfully reduce inventory costs and increase revenue. Deep learning (DL) models have emerged as a popular approach for forecasting rich, multivariate, time-series data because they have proven to perform well in a variety of settings (e.g., DL models performed well in the M5 competition).

At the same time, there has been rapid progress in large foundation language models used for natural language processing (NLP) tasks, such as translation, retrieval-augmented generation, and code completion. These models are trained on massive amounts of textual data derived from a variety of sources, like common crawl and open-source code, which allows them to identify patterns in languages. This makes them very powerful zero-shot tools; for instance, when paired with retrieval, they can answer questions about and summarize current events.

Despite DL-based forecasters largely outperforming traditional methods and progress being made in reducing training and inference costs, they face challenges: most DL architectures require long and involved training and validation cycles before a customer can test the model on a new time-series. A foundation model for time-series forecasting, in contrast, can provide decent out-of-the-box forecasts on unseen time-series data with no additional training, enabling users to focus on refining forecasts for the actual downstream task like retail demand planning.

To that end, in “A decoder-only foundation model for time-series forecasting”, accepted at ICML 2024, we introduce TimesFM, a single forecasting model pre-trained on a large time-series corpus of 100 billion real-world time-points. Compared to the latest large language models (LLMs), TimesFM is much smaller (200M parameters), yet we show that even at such scales, its zero-shot performance on a variety of unseen datasets of different domains and temporal granularities comes close to the state-of-the-art supervised approaches trained explicitly on these datasets. To access the model, please visit our HuggingFace and GitHub repos.

A decoder-only foundation model for time-series forecasting

LLMs are usually trained in a decoder-only fashion that involves three steps. First, text is broken down into subwords called tokens. Then, the tokens are fed into stacked causal transformer layers that produce an output corresponding to each input token (a causal layer cannot attend to future tokens). Finally, the output corresponding to the i-th token summarizes all the information from previous tokens and predicts the (i+1)-th token. During inference, the LLM generates the output one token at a time. For example, when prompted with “What is the capital of France?”, it might generate the token “The”, then condition on “What is the capital of France? The” to generate the next token “capital” and so on until it generates the complete answer: “The capital of France is Paris”.
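The token-by-token inference loop described above can be sketched in a few lines. This is a toy illustration only: `generate`, `scripted_next_token`, and the `<eos>` stop token are hypothetical names, and the "model" is a scripted lookup standing in for a real transformer.

```python
def generate(prompt_tokens, next_token_fn, stop_token="<eos>", max_new_tokens=20):
    """Autoregressive decoding: predict one token, append it, repeat."""
    tokens = list(prompt_tokens)
    generated = []
    for _ in range(max_new_tokens):
        # The next token is predicted from everything seen so far
        # (the prompt plus all previously generated tokens).
        token = next_token_fn(tokens)
        if token == stop_token:
            break
        tokens.append(token)
        generated.append(token)
    return generated


# Hypothetical stand-in for a trained model: it replays a scripted answer.
PROMPT = ["What", "is", "the", "capital", "of", "France", "?"]
ANSWER = ["The", "capital", "of", "France", "is", "Paris"]


def scripted_next_token(tokens):
    position = len(tokens) - len(PROMPT)  # how many tokens were generated so far
    return ANSWER[position] if position < len(ANSWER) else "<eos>"


answer_tokens = generate(PROMPT, scripted_next_token)
print(" ".join(answer_tokens))
```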

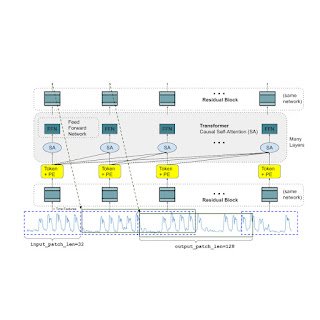

A foundation model for time-series forecasting should adapt to variable context (what we observe) and horizon (what we query the model to forecast) lengths, while having enough capacity to encode all patterns from a large pretraining dataset. Similar to LLMs, we use stacked transformer layers (self-attention and feedforward layers) as the main building blocks for the TimesFM model. In the context of time-series forecasting, we treat a patch (a group of contiguous time-points) as a token, an idea popularized by a recent long-horizon forecasting work. The task then is to forecast the (i+1)-th patch of time-points given the i-th output at the end of the stacked transformer layers.
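To make the notion of a patch concrete, here is a minimal sketch that cuts a series into contiguous, non-overlapping patches. The helper name `split_into_patches` is hypothetical, and dropping a trailing partial patch is a simplifying assumption of this sketch, not a description of how TimesFM handles it.

```python
def split_into_patches(series, patch_len):
    """Split a series into contiguous, non-overlapping patches of patch_len points.

    Assumption for this sketch: a trailing partial patch is dropped.
    """
    if patch_len <= 0:
        raise ValueError("patch_len must be positive")
    n_full = len(series) // patch_len
    return [series[i * patch_len:(i + 1) * patch_len] for i in range(n_full)]


series = list(range(1, 129))           # 128 time-points, numbered 1..128
patches = split_into_patches(series, patch_len=32)
print(len(patches))                    # number of patch "tokens"
print(patches[1][0], patches[1][-1])   # first and last time-point of the second patch
```

Each patch then plays the role that a subword token plays in an LLM.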

However, there are several key differences from language models. Firstly, we need a multilayer perceptron block with residual connections to convert a patch of time-series into a token that can be input to the transformer layers along with positional encodings (PE). For that, we use a residual block similar to our prior work in long-horizon forecasting. Secondly, at the other end, an output token from the stacked transformer can be used to predict a longer length of subsequent time-points than the input patch length, i.e., the output patch length can be larger than the input patch length.

Consider a time-series of length 512 time-points being used to train a TimesFM model with input patch length 32 and output patch length 128. During training, the model is simultaneously trained to use the first 32 time-points to forecast the next 128 time-points, the first 64 time-points to forecast time-points 65 to 192, the first 96 time-points to forecast time-points 97 to 224 and so on. During inference, suppose the model is given a new time-series of length 256 and tasked with forecasting the next 256 time-points into the future. The model will first generate the future predictions for time-points 257 to 384, then condition on the initial 256 length input plus the generated output to generate time-points 385 to 512. On the other hand, if in our model the output patch length was equal to the input patch length of 32, then for the same task we would have to go through eight generation steps instead of just the two above. This increases the chances of more errors accumulating and therefore, in practice, we see that a longer output patch length yields better performance for long-horizon forecasting.
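The arithmetic in this example can be checked with a short sketch. The function names `training_pairs` and `decode_steps` are hypothetical, time-points are 1-indexed as in the text, and clipping a target window at the end of the series is an assumption made here for illustration.

```python
import math


def training_pairs(series_len, input_patch_len, output_patch_len):
    """List (context_end, target_start, target_end) for each training prefix.

    Time-points are 1-indexed and ranges are inclusive. Assumption for this
    sketch: targets that would run past the series are clipped to its end.
    """
    pairs = []
    for context_end in range(input_patch_len, series_len + 1, input_patch_len):
        target_start = context_end + 1
        if target_start > series_len:
            break  # nothing left to forecast after the final patch
        target_end = min(context_end + output_patch_len, series_len)
        pairs.append((context_end, target_start, target_end))
    return pairs


def decode_steps(horizon_len, output_patch_len):
    """Number of autoregressive generation steps needed to cover the horizon."""
    return math.ceil(horizon_len / output_patch_len)


pairs = training_pairs(series_len=512, input_patch_len=32, output_patch_len=128)
print(pairs[:3])               # the first three prefixes named in the text
print(decode_steps(256, 128))  # output patch length 128
print(decode_steps(256, 32))   # output patch length equal to the input patch length
```

Fewer generation steps means fewer opportunities for an earlier prediction error to be fed back into the model as context.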

Figure: TimesFM architecture.

Pretraining data

Just like LLMs get better with more tokens, TimesFM requires a large volume of legitimate time-series data to learn and improve. We have spent a great amount of time creating and assessing our training datasets, and the following is what we have found works best:

Synthetic data helps with the basics. Meaningful synthetic time-series data can be generated using statistical models or physical simulations. These basic temporal patterns can teach the model the grammar of time-series forecasting.

Real-world data adds real-world flavor. We comb through available public time-series datasets, and selectively put together a large corpus of 100 billion time-points. Among these datasets are Google Trends and Wikipedia Pageviews, which track what people are interested in and nicely mirror trends and patterns in many other real-world time-series. This helps TimesFM understand the bigger picture and generalize better when provided with domain-specific contexts not seen during training.
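As a rough illustration of the synthetic-data point above, the sketch below builds a series from a linear trend, a sinusoidal seasonal component, and Gaussian noise. This is an assumed toy recipe, not the generator used to build the TimesFM corpus; `synthetic_series` and all of its parameters are hypothetical.

```python
import math
import random


def synthetic_series(length, period=24, slope=0.05, amplitude=1.0,
                     noise_std=0.1, seed=0):
    """Toy synthetic series: linear trend + sinusoidal seasonality + Gaussian noise."""
    rng = random.Random(seed)  # local generator so results are reproducible
    return [
        slope * t
        + amplitude * math.sin(2 * math.pi * t / period)
        + rng.gauss(0.0, noise_std)
        for t in range(length)
    ]


hourly_like = synthetic_series(length=512, period=24)
print(len(hourly_like))
```

Varying `period`, `slope`, and `noise_std` across many generated series is one simple way to expose a model to a range of basic temporal patterns.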

Zero-shot evaluation results

We evaluate TimesFM zero-shot on data not seen during training using popular time-series benchmarks. We observe that TimesFM performs better than most statistical methods like ARIMA and ETS, and can match or outperform powerful DL models like DeepAR and PatchTST that have been explicitly trained on the target time-series.

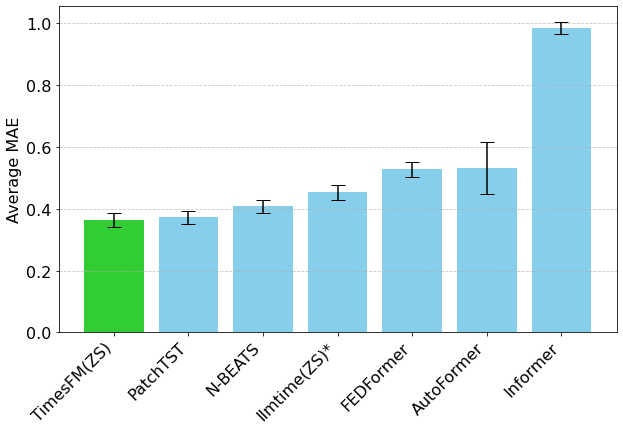

We used the Monash Forecasting Archive to evaluate TimesFM’s out-of-the-box performance. This archive contains tens of thousands of time-series from various domains like traffic, weather, and demand forecasting, covering frequencies ranging from a few minutes to yearly data. Following existing literature, we inspect the mean absolute error (MAE) appropriately scaled so that it can be averaged across the datasets. We see that zero-shot (ZS) TimesFM is better than most supervised approaches, including recent deep learning models. We also compare TimesFM to GPT-3.5 for forecasting using a specific prompting technique proposed by llmtime(ZS). We demonstrate that TimesFM performs better than llmtime(ZS) despite being orders of magnitude smaller.

Figure: Geometric mean (GM) of scaled MAE (the lower the better) of TimesFM(ZS) against other supervised and zero-shot approaches on Monash datasets.
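A minimal sketch of how a scaled MAE can be aggregated with a geometric mean follows. It assumes the scaling divides each model's MAE by the MAE of a simple baseline forecast on the same dataset, which the text does not spell out; the helper names and the toy numbers are hypothetical.

```python
import math


def mae(actual, forecast):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def scaled_mae(actual, forecast, baseline_forecast):
    """MAE of a model divided by the MAE of a baseline (assumed scaling)."""
    return mae(actual, forecast) / mae(actual, baseline_forecast)


def geometric_mean(values):
    """Geometric mean of positive values, computed in log space."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Toy per-dataset scaled MAEs (made-up numbers, for illustration only).
per_dataset = [0.5, 2.0]
print(geometric_mean(per_dataset))
```

Because scaled errors are ratios, the geometric mean treats halving the error on one dataset and doubling it on another as cancelling out, whereas an arithmetic mean would let the dataset with the larger ratio dominate.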

Most of the Monash datasets are short or medium horizon, i.e., the prediction length is not too long. We also test TimesFM on popular benchmarks for long-horizon forecasting against a recent state-of-the-art baseline, PatchTST (and other long-horizon forecasting baselines). In the next figure, we plot the MAE on ETT datasets for the task of predicting 96 and 192 time-points into the future. The metric has been calculated on the last test window of each dataset (as done by the llmtime paper). We see that TimesFM not only surpasses the performance of llmtime(ZS) but also matches that of the supervised PatchTST model explicitly trained on the respective datasets.

Figure: Last window MAE (the lower the better) of TimesFM(ZS) against llmtime(ZS) and long-horizon forecasting baselines on ETT datasets.

Conclusion

We train a decoder-only foundation model for time-series forecasting using a large pretraining corpus of 100B real-world time-points, the majority of which was search interest time-series data derived from Google Trends and pageviews from Wikipedia. We show that even a relatively small 200M parameter pretrained model that uses our TimesFM architecture displays impressive zero-shot performance on a variety of public benchmarks from different domains and granularities.

Acknowledgements

This work is the result of a collaboration between several individuals across Google Research and Google Cloud, including (in alphabetical order): Abhimanyu Das, Weihao Kong, Andrew Leach, Mike Lawrence, Alex Martin, Rajat Sen, Yang Yang, Skander Hannachi, Ivan Kuznetsov and Yichen Zhou.