Machine learning models in the real world are often trained on limited data that may contain unintended statistical biases. For example, in the CelebA celebrity image dataset, a disproportionate number of female celebrities have blond hair. As a result, classifiers incorrectly predict "blond" as the hair color for most female faces. Here, gender is a spurious feature for predicting hair color. Such unfair biases could have significant consequences in critical applications such as medical diagnosis.

Surprisingly, recent work has also discovered that deep networks have an inherent tendency to amplify such statistical biases, through the so-called simplicity bias of deep learning. This bias is the tendency of deep networks to identify weakly predictive features early in training and to keep anchoring on those features. In doing so, they fail to identify more complex and potentially more accurate features.

With this in mind, we propose simple and effective fixes to the dual challenge of spurious features and simplicity bias, using early readouts and feature forgetting.

First, in "Using Early Readouts to Mediate Featural Bias in Distillation", we show that predictions made from the early layers of a deep network (called "early readouts") can automatically signal problems with the quality of the learned representations. In particular, these predictions are more often wrong, and more confidently wrong, when the network relies on spurious features. We use this erroneous confidence to improve outcomes in model distillation, a setting where a larger "teacher" model guides the training of a smaller "student" model.

Then, in "Overcoming Simplicity Bias in Deep Networks using a Feature Sieve", we intervene directly on these indicator signals. We make the network "forget" the problematic features, which pushes it to look for better, more predictive ones. This substantially improves the model's ability to generalize to unseen domains compared to previous approaches.

Our AI Principles and our Responsible AI practices guide how we research and develop these advanced applications and help us address the challenges posed by statistical biases.

Animation comparing hypothetical responses from two models trained with and without the feature sieve.

Early readouts for debiasing distillation

We first illustrate the diagnostic value of early readouts and their application in debiased distillation. The goal of debiased distillation is to ensure that the student model inherits the teacher model's resilience to feature bias. We start with a standard distillation framework in which the student is trained on a mixture of two objectives:

- label matching, which minimizes the cross-entropy loss between the student outputs and the ground-truth labels;
- teacher matching, which minimizes the KL divergence between the student and teacher outputs for any given input.
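
To illustrate the two terms, the sketch below computes a per-example distillation loss in NumPy. The mixing weight `alpha` and the function names are our own assumptions. The post does not specify how the two terms are balanced, and common refinements such as a softmax temperature are omitted.

```python
import numpy as np

def log_softmax(logits):
    # Numerically stable log-softmax over the last (class) axis.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))

def distillation_loss(student_logits, teacher_logits, labels, alpha=0.5):
    """Per-example mix of label matching and teacher matching.

    alpha is a hypothetical mixing weight:
        alpha * CE(labels, student) + (1 - alpha) * KL(teacher || student)
    """
    s_logp = log_softmax(student_logits)
    t_logp = log_softmax(teacher_logits)
    # Label matching: cross-entropy against the ground-truth class index.
    ce = -s_logp[np.arange(len(labels)), labels]
    # Teacher matching: KL(teacher || student), summed over classes.
    kl = (np.exp(t_logp) * (t_logp - s_logp)).sum(axis=-1)
    return alpha * ce + (1.0 - alpha) * kl
```

When the student exactly reproduces the teacher's logits, the KL term is zero and only the label-matching term remains.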

Suppose we train a linear decoder on top of an intermediate representation of the student model. This decoder is a small auxiliary neural network, which we call Aux. We refer to its output as an early readout of the network representation.

We find that early readouts make more errors on instances that contain spurious features. Furthermore, the readout's confidence on those errors is higher than its confidence on other errors. This suggests that the confidence of early readouts on their errors is a fairly strong, automated indicator of the model's dependence on potentially spurious features.
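
Here is a minimal sketch of an early readout. It assumes a purely linear Aux head (`weights`, `bias`) applied to a batch of intermediate activations; all names are hypothetical. For each example, it returns the readout's confidence when the prediction is wrong and 0 otherwise. This is the quantity the finding above refers to.

```python
import numpy as np

def softmax(logits):
    # Numerically stable softmax over the last (class) axis.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

def early_readout(hidden, weights, bias):
    """Linear decoder ("Aux") applied to an intermediate representation.

    hidden:  (batch, d) activations from an intermediate student layer.
    weights: (d, num_classes) and bias: (num_classes,) are hypothetical Aux params.
    """
    return softmax(hidden @ weights + bias)

def error_confidence(readout_probs, labels):
    """Per-example confidence of the readout when it is wrong, else 0."""
    preds = readout_probs.argmax(axis=-1)
    conf = readout_probs.max(axis=-1)
    return np.where(preds != labels, conf, 0.0)
```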

We used this signal to modulate, on a per-instance basis, how much the teacher contributes to the distillation loss. This led to significant improvements in the trained student model.
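
The post does not give the exact modulation rule, so the sketch below uses a hypothetical one. It scales each example's teacher-matching term by `1 + beta * error_conf`, which gives the teacher more weight wherever the early readout is confidently wrong. Treat both the formula and `beta` as illustrative assumptions, not as the method from the paper.

```python
import numpy as np

def weighted_distillation_loss(ce, kl, error_conf, beta=1.0):
    """Batch loss with an instance-dependent weight on the teacher term.

    ce, kl:     per-example label-matching and teacher-matching losses, shape (batch,).
    error_conf: per-example early-readout confidence on errors (0 where the
                readout is correct), shape (batch,).
    beta:       hypothetical scale; 1 + beta * error_conf is an illustrative
                weighting, not the paper's formula.
    """
    teacher_weight = 1.0 + beta * error_conf
    return float(np.mean(ce + teacher_weight * kl))
```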

We evaluated our approach on standard benchmark datasets known to contain spurious correlations: Waterbirds, CelebA, CivilComments and MNLI. Each of these datasets contains groups of examples that share an attribute potentially correlated with the label in a spurious way. For example, the CelebA dataset mentioned above includes the groups {blond male, blond female, non-blond male, non-blond female}. When predicting hair color, models typically perform worst on the {non-blond female} group.

A useful measure of model performance is therefore worst-group accuracy, i.e., the lowest accuracy among all known groups in the dataset. We improved the worst-group accuracy of student models on all datasets. We also improved overall accuracy on three of the four datasets, which shows that our gains on any one group do not come at the expense of accuracy on other groups. More details are available in our paper.
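
Worst-group accuracy is easy to compute once each example carries a group label. Below is a small sketch; the CelebA-style group names and toy predictions in the usage lines are invented for illustration.

```python
import numpy as np

def worst_group_accuracy(preds, labels, groups):
    """Return the lowest per-group accuracy and the per-group breakdown."""
    preds, labels, groups = map(np.asarray, (preds, labels, groups))
    per_group = {}
    for g in np.unique(groups):
        mask = groups == g
        per_group[g.item()] = float((preds[mask] == labels[mask]).mean())
    return min(per_group.values()), per_group

# Toy usage with made-up predictions (1 = blond, 0 = non-blond).
worst, per_group = worst_group_accuracy(
    preds=[1, 1, 0, 1, 1, 0],
    labels=[1, 1, 0, 0, 1, 0],
    groups=["blond female", "blond female", "non-blond male",
            "non-blond female", "blond male", "non-blond female"],
)
print(worst)  # 0.5: one of the two non-blond female faces is called blond
```

Overall accuracy on this toy batch is 5/6. The worst-group number exposes the failure that the average hides.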

Comparison of the worst-group accuracies of different distillation techniques relative to that of the teacher model. Our method outperforms other methods on all datasets.

Overcoming simplicity bias with a function sieve

In a second, closely related project, we intervene directly on the information provided by early readouts to improve feature learning and generalization. The workflow alternates between identifying problematic features and erasing them from the network. Our primary hypothesis is that early features are more prone to simplicity bias. By erasing ("sieving") these features, we allow richer feature representations to be learned.

Training workflow with the feature sieve. We alternate between identifying problematic features (in a training iteration) and erasing them from the network (in a forgetting iteration).

The identification and erasure steps work as follows:

- Identifying simple features: We train the primary model and the readout model (Aux above) in the conventional way, via forward and back-propagation. Feedback from the auxiliary layer does not back-propagate into the main network. This forces the auxiliary layer to learn from features that are already available, rather than creating or reinforcing them in the main network.

- Applying the feature sieve: We erase the identified features in the early layers of the network using a novel forgetting loss, Lf. This loss is simply the cross-entropy between the readout and a uniform distribution over labels. In effect, all information that leads to nontrivial readouts is erased from the primary network. During this step, the auxiliary network and the upper layers of the main network are kept unchanged.
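
To make the two steps concrete, here is a toy version with hand-written gradients. It uses a single linear "early layer" (`w_early`) and a linear Aux readout (`w_aux`). This is a simplified sketch under our own assumptions, not the paper's implementation. A real model would be deep, would use an autodiff framework, and would express the one-way feedback of the identification step as a stop-gradient.

The gradient of Lf with respect to the readout logits z is softmax(z) - 1/K, where K is the number of classes. This gradient vanishes exactly when the readout is uniform.

```python
import numpy as np

def log_softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def softmax(z):
    return np.exp(log_softmax(z))

def forgetting_loss(readout_logits):
    """Lf: cross-entropy between a uniform target and the readout distribution."""
    # -(1/K) * sum_k log p_k, averaged over the batch.
    return float(-log_softmax(readout_logits).mean(axis=-1).mean())

def identify_step(x, labels, w_early, w_aux, lr=0.1):
    """Train only the Aux readout with cross-entropy.

    Early features are treated as constants (the stop-gradient),
    so no update reaches w_early.
    """
    h = x @ w_early                        # early features, read but not updated
    p = softmax(h @ w_aux)
    onehot = np.eye(w_aux.shape[1])[labels]
    grad_z = (p - onehot) / len(labels)    # dCE/dlogits, batch-averaged
    return w_aux - lr * h.T @ grad_z       # only the readout changes

def sieve_step(x, w_early, w_aux, lr=0.1):
    """Update only the early layer to push the frozen readout toward uniform."""
    h = x @ w_early
    p = softmax(h @ w_aux)
    k = w_aux.shape[1]
    grad_z = (p - 1.0 / k) / len(x)        # dLf/dlogits, batch-averaged
    grad_h = grad_z @ w_aux.T              # back-propagate through the frozen Aux
    return w_early - lr * x.T @ grad_h     # Aux (and upper layers) untouched
```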

A small number of configuration parameters control how the feature sieve is applied to a given dataset:

- The position and complexity of the auxiliary network control the complexity of the features that are identified and erased.
- The mix of learning and forgetting steps controls how strongly the model is pushed to learn more complex features.

These choices are dataset-dependent. We make them via hyperparameter search, maximizing validation accuracy as a standard measure of generalization. Because the search space includes "no forgetting" (i.e., the baseline model), we expect to find settings that are at least as good as the baseline.
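
One way to picture these knobs is as a small configuration object plus a selection loop. The field names (`aux_layer`, `aux_hidden`, `forget_every`) are hypothetical stand-ins for the parameters described above. `validation_accuracy` stands for any callable that trains a model with a given config and scores it on held-out data. Since the baseline (`forget_every=None`) is one of the candidates, the selected config cannot score below it on validation.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class SieveConfig:
    aux_layer: int               # hypothetical: layer the Aux readout attaches to
    aux_hidden: int              # hypothetical: Aux width (0 = purely linear readout)
    forget_every: Optional[int]  # a forgetting step after every N learning steps; None = baseline

def step_schedule(cfg, num_learning_steps):
    """Interleaved sequence of 'learn' and 'forget' steps implied by cfg."""
    schedule = []
    for i in range(1, num_learning_steps + 1):
        schedule.append("learn")
        if cfg.forget_every and i % cfg.forget_every == 0:
            schedule.append("forget")
    return schedule

def select_config(configs, validation_accuracy):
    """Pick the candidate with the highest validation accuracy."""
    return max(configs, key=validation_accuracy)
```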

Below, we show the features learned by the baseline model (middle row) and by our model (bottom row) on two benchmark datasets: biased activity recognition (BAR) and animal categorization (NICO). We estimated feature importance with post-hoc gradient-based importance scoring (Grad-CAM). The orange-red end of the spectrum indicates high importance, and green-blue indicates low importance. As the images show, our trained models focus on the primary object of interest. The baseline model tends to focus on background features that are simpler and spuriously correlated with the label.
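
For reference, the core of Grad-CAM fits in a few lines. Each channel of a chosen convolutional layer is weighted by the spatial average of the class-score gradient. The weighted maps are then summed, and negative values are clipped. The sketch assumes the activations and gradients have already been extracted for a single image. In practice, the resulting map is also upsampled to the image resolution and rendered with a colormap like the one described above.

```python
import numpy as np

def grad_cam(activations, gradients):
    """Grad-CAM heatmap for one image.

    activations: (C, H, W) feature maps from a chosen conv layer.
    gradients:   (C, H, W) gradient of the target class score w.r.t. those maps.
    """
    weights = gradients.mean(axis=(1, 2))             # (C,) pooled gradients per channel
    cam = np.tensordot(weights, activations, axes=1)  # (H, W) weighted sum of maps
    cam = np.maximum(cam, 0.0)                        # keep positive evidence only
    peak = cam.max()
    return cam / peak if peak > 0 else cam            # normalize to [0, 1]
```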

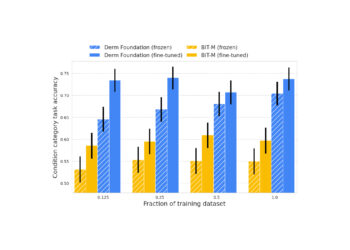

Because the feature sieve learns better, more generalizable features, it shows substantial gains over a range of relevant baselines on real-world spurious-feature benchmark datasets: BAR, CelebA Hair, NICO and ImageNet-A. The margins reach up to 11% (see the figure below). More details are available in our paper.

Our feature sieve method improves accuracy by significant margins relative to the closest baseline on a range of feature generalization benchmark datasets.

Conclusion

We hope that our work on early readouts and their use in feature sieving will spur the development of a new class of adversarial feature learning approaches. We also hope it will help improve the generalization and robustness of deep learning systems.

Acknowledgements

The work on applying early readouts to debiasing distillation was conducted in collaboration with our academic partners Durga Sivasubramanian, Anmol Reddy and Prof. Ganesh Ramakrishnan at IIT Bombay. We extend our sincere gratitude to Praneeth Netrapalli and Anshul Nasery for their feedback and recommendations. We are also grateful to Nishant Jain, Shreyas Havaldar, Rachit Bansal, Kartikeya Badola, Amandeep Kaur and the whole cohort of pre-doctoral researchers at Google Research India for taking part in research discussions. Special thanks to Tom Small for creating the animation used in this post.