Responsibility & Safety

Exploring the promise and risks of a future with more capable AI

Imagine a future where we interact regularly with a range of advanced artificial intelligence (AI) assistants, and where hundreds of thousands of assistants interact with each other on our behalf. These experiences and interactions may soon become part of our everyday reality.

General-purpose foundation models are paving the way for increasingly advanced AI assistants. Capable of planning and performing a wide range of actions in line with a person’s aims, they could add immense value to people’s lives and to society, serving as creative partners, research analysts, educational tutors, life planners and more.

They could also bring about a new phase of human interaction with AI. This is why it’s so important to think proactively about what this world could look like, and to help steer responsible decision-making and beneficial outcomes ahead of time.

Our new paper is the first systematic treatment of the ethical and societal questions that advanced AI assistants raise for users, developers and the societies they’re integrated into, and provides significant new insights into the potential impact of this technology.

We cover topics such as value alignment, safety and misuse, the impact on the economy, the environment, the information sphere, access and opportunity and more.

This is the result of one of our largest ethics foresight projects to date. Bringing together a wide range of experts, we examined and mapped the new technical and moral landscape of a future populated by AI assistants, and characterised the opportunities and risks society might face. Here we outline some of our key takeaways.

A profound impact on users and society

Illustration of the potential for AI assistants to impact research, education, creative tasks and planning.

Advanced AI assistants could have a profound impact on users and society, and be integrated into most aspects of people’s lives. For example, people may ask them to book holidays, manage social time or perform other life tasks. If deployed at scale, AI assistants could impact the way people approach work, education, creative projects, hobbies and social interaction.

Over time, AI assistants could also influence the goals people pursue and their path of personal development through the information and advice assistants give and the actions they take. Ultimately, this raises important questions about how people interact with this technology and how it can best support their goals and aspirations.

Human alignment is important

Illustration showing that AI assistants should be able to understand human preferences and values.

AI assistants will likely have a significant level of autonomy for planning and performing sequences of tasks across a range of domains. Because of this, AI assistants present novel challenges around safety, alignment and misuse.

With more autonomy comes greater risk of accidents caused by unclear or misinterpreted instructions, and greater risk of assistants taking actions that are misaligned with the user’s values and interests.

More autonomous AI assistants may also enable high-impact forms of misuse, like spreading misinformation or engaging in cyber attacks. To address these potential risks, we argue that limits must be set on this technology, and that the values of advanced AI assistants must better align to human values and be compatible with wider societal ideals and standards.

Communicating in natural language

Illustration of an AI assistant and a person communicating in a human-like way.

Able to fluidly communicate using natural language, the written output and voices of advanced AI assistants may become hard to distinguish from those of humans.

This development opens up a complex set of questions around trust, privacy, anthropomorphism and appropriate human relationships with AI: How can we make sure users can reliably identify AI assistants and stay in control of their interactions with them? What can be done to ensure users aren’t unduly influenced or misled over time?

Safeguards, such as those around privacy, need to be put in place to address these risks. Importantly, people’s relationships with AI assistants must preserve the user’s autonomy, support their ability to flourish and not rely on emotional or material dependence.

Cooperating and coordinating to fulfill human preferences

Illustration of how interactions between AI assistants and people will create different network effects.

If this technology becomes widely available and deployed at scale, advanced AI assistants will need to interact with each other, with users and non-users alike. To help avoid collective action problems, these assistants must be able to cooperate successfully.

For example, thousands of assistants might try to book the same service for their users at the same time, potentially crashing the system. In an ideal scenario, these AI assistants would instead coordinate on behalf of human users and the service providers involved to find common ground that better meets different people’s preferences and needs.

Given how useful this technology may become, it’s also important that no one is excluded. AI assistants should be broadly accessible and designed with the needs of different users and non-users in mind.

More evaluations and foresight are needed

Illustration of how evaluations on many levels are important for understanding AI assistants.

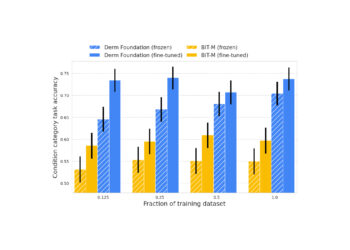

AI assistants could display novel capabilities and use tools in new ways that are challenging to foresee, making it hard to anticipate the risks associated with their deployment. To help manage such risks, we need to engage in foresight practices that are based on comprehensive tests and evaluations.

Our previous research on evaluating social and ethical risks from generative AI identified some of the gaps in traditional model evaluation methods, and we encourage much more research in this space.

For instance, comprehensive evaluations that address the effects of both human-computer interactions and the wider effects on society could help researchers understand how AI assistants interact with users, non-users and society as part of a broader network. In turn, these insights could inform better mitigations and responsible decision-making.

Building the future we want

We may be facing a new era of technological and societal transformation inspired by the development of advanced AI assistants. The choices we make today, as researchers, developers, policymakers and members of the public, will guide how this technology develops and is deployed across society.

We hope that our paper will function as a springboard for further coordination and cooperation to collectively shape the kind of beneficial AI assistants we’d all like to see in the world.