Australian online regulator eSafety has sent notices to the makers of some of the world’s most popular online games, over concerns that the platforms are being used by sexual predators to groom children and by extremists to radicalise them.

The legally enforceable transparency reporting notices require Roblox, Fortnite, Minecraft and Steam to explain how they are identifying, preventing and responding to grooming, sexual extortion and radicalisation issues, as well as cyberbullying and online hate.

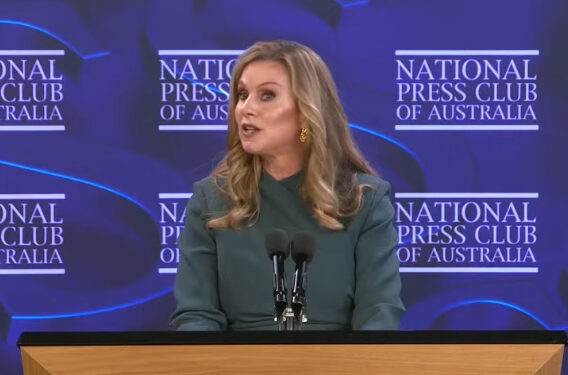

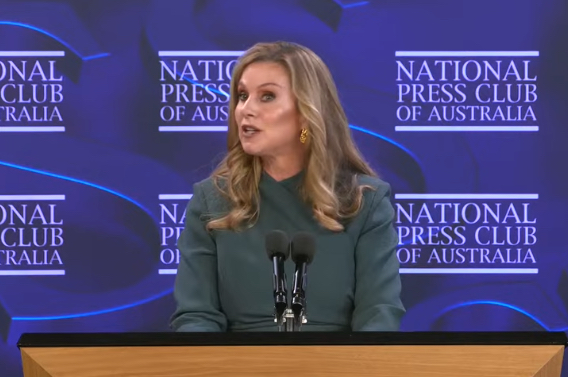

eSafety Commissioner Julie Inman Grant wants to know how closely their systems align with the government’s Basic Online Safety Expectations.

“What we often see after these offenders make contact with children in online game environments, they then move children to private messaging services,” she said.

“Gaming platforms are amongst the online spaces most heavily used by Australian children, functioning not only as places to play, but also as places to socialise and communicate.

“Predatory adults know this and target children through grooming or embedding terrorist and violent extremist narratives in gameplay, increasing the risks of contact offending, radicalisation and other off-platform harms.”

90% of kids game online

eSafety research into children and gaming found that around 90% of Australian kids aged 8 to 17 played online games. Inman Grant said there have been multiple reports of grooming on all four of these platforms, as well as terrorist and violent extremist-themed gameplay.

“This includes Islamic State-inspired games and recreations of mass shootings on Roblox, as well as far right groups recreating fascist imagery in Minecraft,” she said.

“These companies must take meaningful steps to prevent their services becoming onramps to abuse, extremist violence, radicalisation or lifelong harm.”

Compliance with a transparency reporting notice is mandatory. If companies fail to respond, eSafety can seek financial penalties of up to $825,000 a day.

Companies found in breach of a direction to comply with a code such as the Online Safety Codes and Standards face penalties of up to $49.5 million per breach.

Earlier this year, Roblox pledged to make multiple key changes to protect children, including more stringent age assurance, making accounts for under-16s private by default, and introducing tools to prevent adult users from contacting under-16s without parental consent.

eSafety said it will be testing the implementation of those commitments to validate their effectiveness.